What if our spaces could convey information about unspoken feelings and could be the extension of mind? How can we create a reciprocal relationship between the human mind, body, and the built environment, allowing them to shape one another?

This research leveraged Artificial Intelligence (AI) as extended intelligence to empower users to use their brains to impact architecture and animate objects. By blurring the lines between the physical, digital, and biological spheres, we developed an interactive installation that served as an interface between human cognition and the surrounding space.

Wisteria is an extension of its visitors’ minds and bodies. It is an emotive, intelligent installation that responds in real time to people’s emotions, based on biological and neurological data. In this project, visitors can change the color and form of the installation using their brain activity and emotions. We integrated artificial intelligence (AI), wearable technology, sensory environments, and adaptive architecture to create an emotional bond between a space and its occupants and to encourage affective interactions between the two.

The project’s objectives were to 1) measure and analyze biological and neurological data to detect emotions, 2) map and illustrate that emotional data, and 3) link occupants’ emotions and cognition to a built environment through a real-time emotive feedback loop. Using an interactive installation as a case study, this work examines the cognition-emotion-space interaction through changes in volume, color, and light as a means of emotional expression.

Using affective computing, or “Emotion AI,” this project created a cyber-physical space that could serve as a form of remedial therapy, particularly for people who have difficulty communicating emotions, such as those with autism, PTSD, or mental disabilities. It contributes to the current theory and practice of cyber-physical design and the role AI plays in it, as well as to the interaction of technology and empathy.

2019

2020

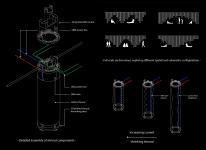

Here, the space is filled with a forest of cylindrical fabric shrouds suspended from the ceiling. Upon sensing the presence of an occupant, the shrouds begin to fluctuate, driven by a programmable material (shape-memory alloy, or SMA), expanding and contracting the volume of the space in rhythm and sequence. Embedded within each shroud is an LED that pulses in a breathing rhythm synced with the actuation of the SMA. The shrouds are arranged to create a distinct spatial progression and to bring forth a heightened perception of scale and awareness of oneself within the space. The atmospheric qualities of the space are determined by the occupant’s emotions, detected in real time using smart wearables (a smart watch and an OpenBCI EEG headset) and affective computing algorithms developed by our team. This system translates a set of biometrics (e.g., heart rate, skin conductance, blood volume, and temperature) into emotional categories and changes the shape, light, and color of the space accordingly to moderate the emotion. If stress is detected, the space begins to morph: the ceiling rises and expands the interior volume, colors brighten, and natural air is introduced. In the process, an empathetic bond is formed between host and occupant. Wisteria uses real-time emotions derived from neurophysiological data as the agent of change in the environment.
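The loop described above, from biometric reading to emotional category to spatial response, can be illustrated with a minimal sketch. This is not the team's affective computing algorithm, which is not documented here. The thresholds, the three category names, the `Biometrics` and `SpaceState` types, and the four-second breathing period are all hypothetical stand-ins chosen for illustration. Only the stress response (ceiling rises, colors brighten) follows the description above.

```python
import math
from dataclasses import dataclass


@dataclass
class Biometrics:
    """One reading from the wearables (field names are illustrative)."""
    heart_rate_bpm: float
    skin_conductance_us: float  # microsiemens
    skin_temp_c: float


@dataclass
class SpaceState:
    """Target state of the installation, each value normalized to 0..1."""
    ceiling_lift: float  # 1.0 = shrouds fully raised, volume expanded
    brightness: float    # 1.0 = brightest LED output


def classify_emotion(sample: Biometrics) -> str:
    """Rule-based placeholder for the emotion classifier.

    The thresholds are assumptions for this sketch, not measured values.
    """
    if sample.heart_rate_bpm > 100 and sample.skin_conductance_us > 8.0:
        return "stressed"
    if sample.heart_rate_bpm < 70 and sample.skin_conductance_us < 3.0:
        return "calm"
    return "neutral"


# Stress opens and brightens the space, as described in the text.
# The "calm" and "neutral" responses are assumed for illustration.
RESPONSES = {
    "stressed": SpaceState(ceiling_lift=1.0, brightness=1.0),
    "neutral": SpaceState(ceiling_lift=0.5, brightness=0.6),
    "calm": SpaceState(ceiling_lift=0.2, brightness=0.4),
}


def respond(sample: Biometrics) -> SpaceState:
    """Map one biometric reading to a target state for the space."""
    return RESPONSES[classify_emotion(sample)]


def breathing_brightness(t_seconds: float, period_s: float = 4.0) -> float:
    """LED level in 0..1 that rises and falls smoothly once per period."""
    return 0.5 * (1.0 - math.cos(2.0 * math.pi * t_seconds / period_s))
```

In a running installation, `respond` would be called on each new reading and its output sent to the SMA and LED drivers, while `breathing_brightness` would modulate the LEDs between updates.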

Wisteria intends to behave as an embodiment of human emotion in the physical and built form. Utilizing a merger of advanced emotion detection systems and smart programmable materials, a new connection is revealed between host and occupant. Within Wisteria, the user is given agency and autonomy over the space through which they traverse, shedding light on the potential of future integrations between architecture and artificial intelligence. Wisteria can be used to express and solicit emotions through non-human representation, becoming an effective tool for the communication of emotions.

Wisteria illustrates how spaces can serve as an interface with emotions. The end result is an immersive spatial experience that gives the user a key role by activating the space upon their involvement. Users are given an indication of their emotional and physiological states, and thus a tool to enhance, mitigate, or simply become aware of their emotions. This installation demonstrates how spaces can be controlled through users’ thoughts and feelings, becoming living organisms with lifelike behavior learned from users, responding to their needs in real time. Within this project lies a singular objective: to reconcile the relationship between humans and architecture, and to redefine this relationship as one of emotional empathy and active compassion.

Video:

https://www.youtube.com/watch?v=RhOalZiI4ok

Design: Mona Ghandi, Mohamed Ismail, Shanle Lin, Aisha Marcos

Fabrication: Mohamed Ismail, Shanle Lin, Marcus Blaisdell

Programming & Electrical: Marcus Blaisdell, Sal Bagaveyev

Cinematography: Nicole Liu, Mohamed Ismail

Wisteria: Incarnation of Human Emotion and Cognition in Space using Artificial Intelligence by Mona Ghandi in United States won the WA Award Cycle 38.
